In the Age of Large Models, Human Attention Matters Even More

“Attention Is All You Need” was originally written for models.

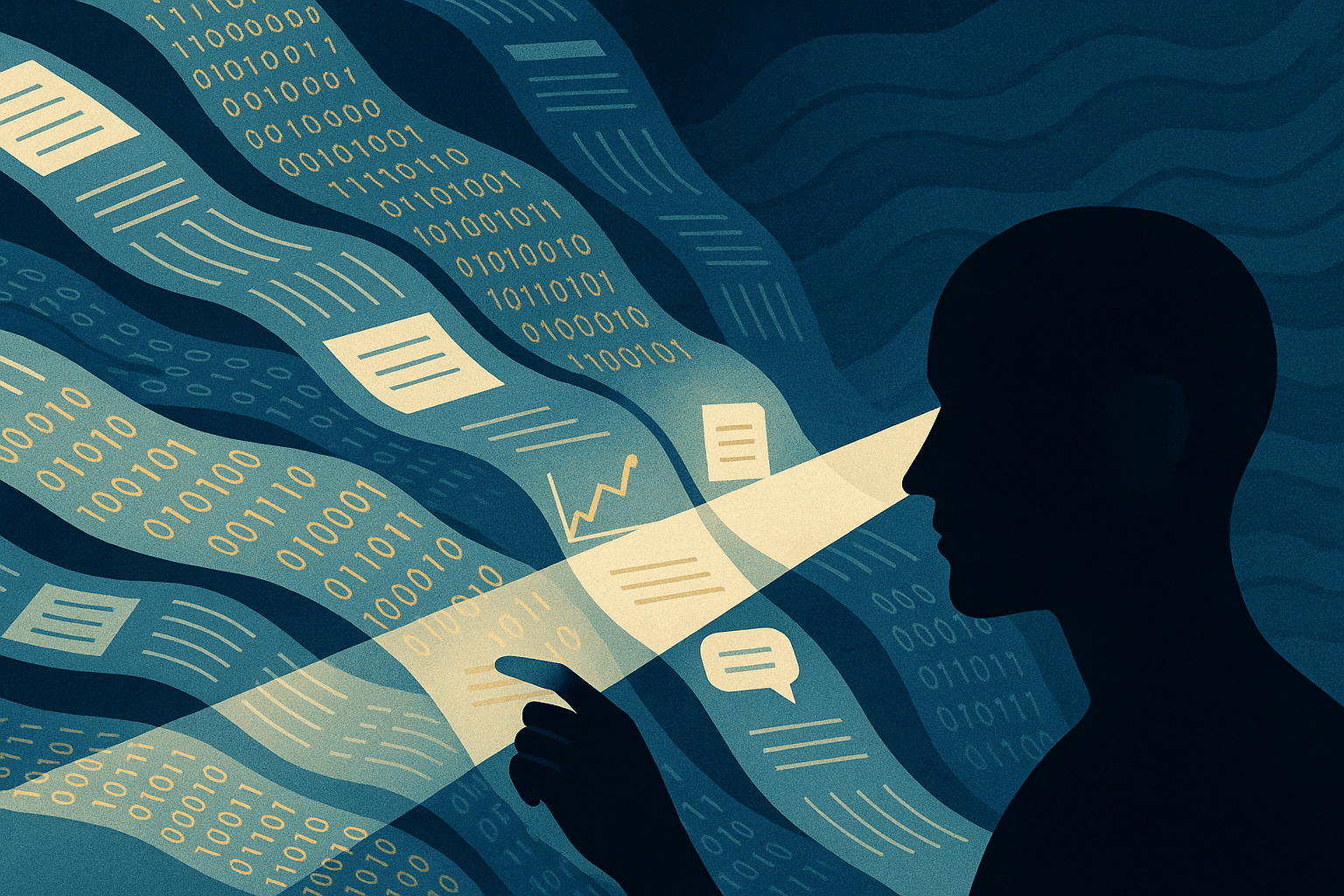

It described the core insight behind transformers: instead of relying on recurrence or convolution, a model could use attention to determine which parts of a large input matter more, which relationships are more relevant, and which signals deserve priority. In that sense, attention is one of the key mechanisms through which a model organizes information and builds context.

But in the age of large models, I increasingly feel that the more difficult battlefield of attention is not inside the model. It is inside the human being.

A model’s attention is fundamentally a problem of computation. Human attention is a combined bottleneck of biology, psychology, and time. Models can process huge volumes of input and generate code, scripts, documentation, requirement drafts, testing plans, and analyses at speed. Human beings cannot. We cannot inspect every line of AI-generated output, nor can we preserve the same quality of judgment under a continuous flood of information.

The problem becomes even more subtle because we do not actually want AI to slow down.

When facing a system that can work at machine speed, our instinct is rarely to ask it to do less. We want it to do more: branch more paths, generate more versions, explore more options, run more tasks in parallel, produce more drafts, and open more directions at once. That instinct is natural, because AI’s value lies precisely in its ability to expand the space of possibility.

But once output speed far exceeds human absorption speed, a new bottleneck emerges. The problem is no longer that the model is too weak. The problem is that human attention begins to collapse under the volume of what the model produces. AI becomes the engine of output, while the human becomes the slowest, most fragile, and most overloaded component in the system.

So the real question in the age of large models is no longer only how to make AI generate better outputs. It is how to prevent human attention from being destroyed by the very abundance AI creates.

That means human-AI collaboration cannot simply mean pushing more work onto AI. It requires a redesign of how attention is allocated.

The first principle is this: not every AI output deserves full human reading.

That sounds obvious, but it cuts against old habits. Many people still operate with a pre-AI illusion of work: if something has been generated, it ought to be fully read, fully understood, and fully checked. In the AI era, that is often impossible and frequently unnecessary. What matters is not reading everything. What matters is reading only what is most worth reading. If humans continue to use artisanal review logic against machine-scale output, AI will simply drag them into a new form of busywork.

The second principle is this: humans should not face raw output streams directly; they should first face layered and filtered interfaces.

In other words, AI should not only generate content. It should also organize content. A good collaboration pattern does not throw fifty thousand words, hundreds of file diffs, and a dozen alternative plans directly at a person. It first compresses them into summaries, risk markers, differences, pending decisions, anomalies, and explicitly flagged items that require human confirmation. The real object of attention is not the entire output. It is the set of points most worthy of attention.
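To make the idea of a layered interface concrete, here is a minimal sketch in Python. It assumes each piece of AI output already carries a few flags; the names `OutputItem`, `build_digest`, and the flag fields are hypothetical and chosen only for illustration. The point is the shape of what the human sees first: counts and flagged titles, not the raw stream.

```python
from dataclasses import dataclass


@dataclass
class OutputItem:
    """One piece of AI output plus hypothetical triage flags."""
    title: str
    risk: bool = False            # marked as risky by an upstream check
    needs_decision: bool = False  # requires explicit human confirmation
    anomaly: bool = False         # deviates from what was expected


def build_digest(items):
    """Compress a raw output stream into the few points worth attention."""
    flagged = [i for i in items if i.risk or i.needs_decision or i.anomaly]
    return {
        "total_items": len(items),
        "risks": [i.title for i in items if i.risk],
        "pending_decisions": [i.title for i in items if i.needs_decision],
        "anomalies": [i.title for i in items if i.anomaly],
        # Everything else is only counted, not shown.
        "unflagged_count": len(items) - len(flagged),
    }


items = [
    OutputItem("rename helper functions"),
    OutputItem("change auth token expiry", risk=True, needs_decision=True),
    OutputItem("update README wording"),
    OutputItem("test suite shrank by 40 cases", anomaly=True),
]
digest = build_digest(items)
```

In this sketch the person starts from `digest` and opens the underlying items only when a flagged title calls for it. How the flags get set is left open here; that is the harder part and depends on the workflow.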

The third principle is this: attention must be budgeted.

Companies do capital allocation. Systems do resource scheduling. But most people do almost no attention budgeting when working with AI. That is a serious mistake. Human attention is not infinite. In fact, it is often scarcer than time, because the number of hours in which a person can make truly high-quality judgments is limited. So outputs should be tiered by importance and risk: some deserve a quick scan, some a summary review, some sampling and verification, and a few require full human inspection. Not everything deserves full review.
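One way to picture such a budget is the rough Python sketch below. The four tiers come from the paragraph above; everything else is an assumption made for illustration, including the 1-to-5 scoring scale, the minutes assigned to each tier, and the names `Output`, `assign_tier`, and `plan_review`.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewTier(Enum):
    QUICK_SCAN = "quick scan"
    SUMMARY_REVIEW = "summary review"
    SAMPLE_AND_VERIFY = "sampling and verification"
    FULL_INSPECTION = "full human inspection"


# Hypothetical cost of each tier in minutes of focused attention.
MINUTES_PER_TIER = {
    ReviewTier.QUICK_SCAN: 2,
    ReviewTier.SUMMARY_REVIEW: 5,
    ReviewTier.SAMPLE_AND_VERIFY: 15,
    ReviewTier.FULL_INSPECTION: 45,
}


@dataclass
class Output:
    name: str
    importance: int  # assumed scale: 1 (low) to 5 (high)
    risk: int        # assumed scale: 1 (low) to 5 (high)


def stakes(output):
    """Treat an output as being as serious as its worse dimension."""
    return max(output.importance, output.risk)


def assign_tier(output):
    score = stakes(output)
    if score >= 5:
        return ReviewTier.FULL_INSPECTION
    if score == 4:
        return ReviewTier.SAMPLE_AND_VERIFY
    if score == 3:
        return ReviewTier.SUMMARY_REVIEW
    return ReviewTier.QUICK_SCAN


def plan_review(outputs, budget_minutes):
    """Spend the attention budget on the highest-stakes outputs first."""
    planned, deferred = [], []
    remaining = budget_minutes
    for item in sorted(outputs, key=stakes, reverse=True):
        tier = assign_tier(item)
        cost = MINUTES_PER_TIER[tier]
        if cost <= remaining:
            planned.append((item.name, tier))
            remaining -= cost
        else:
            deferred.append(item.name)
    return planned, deferred


outputs = [
    Output("README typo fix", importance=1, risk=1),
    Output("payment migration script", importance=5, risk=5),
    Output("logging refactor", importance=3, risk=2),
]
planned, deferred = plan_review(outputs, budget_minutes=50)
```

With a 50-minute budget, the migration script consumes 45 minutes of full inspection, the refactor gets a 5-minute summary review, and the typo fix is deferred. The numbers matter less than the habit: the budget is fixed first, and the outputs compete for it.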

The fourth principle is this: high-risk decisions should remain human-led, while low-risk exploration should be massively delegated to AI.

The worst version of collaboration is misallocation: humans spending their best attention on low-value details while AI is left unsupervised at points of real risk. The better pattern is the opposite. Let AI expand at scale in low-cost experimentation, broad search, and version branching. But let humans retain control over direction, standards, final judgment, and responsibility. Human beings should not compete with AI on volume. They should protect the few critical judgment points that must not be drowned by output.

The fifth principle is this: build interruption protection into the workflow.

One of the deepest weaknesses of human attention is that it collapses when continuously fragmented. The danger in the AI era is not only that there is too much content. It is that signals arrive too frequently. A summary now, a diff a minute later, a new suggestion right after that: the human mind ends up functioning like a notification processor. Over time, the problem is not just more information. It is shallower thinking. What is needed here is not more willpower, but better interruption control: batched review instead of constant interruption, fixed review windows instead of random bombardment, anomaly reporting rather than reporting everything.
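A small Python sketch of that kind of interruption control follows. It assumes every incoming signal can be labeled as an anomaly or not; the names `Signal`, `ReviewInbox`, `receive`, and `open_review_window` are hypothetical. Ordinary signals wait for the next review window, and only anomalies are allowed to interrupt.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    message: str
    is_anomaly: bool = False  # assumed to be set by an upstream check


@dataclass
class ReviewInbox:
    """Buffers routine signals; lets only anomalies through immediately."""
    pending: list = field(default_factory=list)

    def receive(self, signal):
        """Return the signal if it should interrupt now, otherwise None."""
        if signal.is_anomaly:
            return signal
        self.pending.append(signal)
        return None

    def open_review_window(self):
        """Hand over everything buffered since the last window, then reset."""
        batch, self.pending = self.pending, []
        return batch


inbox = ReviewInbox()
first = inbox.receive(Signal("summary ready"))
urgent = inbox.receive(Signal("tests were deleted", is_anomaly=True))
second = inbox.receive(Signal("new diff available"))
batch = inbox.open_review_window()
```

Here only the deleted-tests signal reaches the person right away. The summary and the diff arrive together when the review window opens, which is the difference between reviewing on a schedule and being paged by every output.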

That is why human attention becomes more important, not less, in the age of large models. It is not because model attention matters less. It is because stronger models push human attention closer to its true limit. The growth of model capability produces not only efficiency gains, but also a new form of cognitive pressure. AI makes generation cheap. It does not make judgment equally cheap. AI accelerates exploration. It does not upgrade the absorption speed of the human mind.

At a deeper level, the most valuable form of human-AI collaboration may not be “the more AI does, the better.” It may be “the more AI does, the more human attention can be concentrated on the few things that matter most.” The highest form of collaboration is not turning people into proofreaders for machine output. It is turning AI into a pre-filter, risk detector, summarizer, organizer, and amplifier for human attention.

If the breakthrough of transformers was learning how to allocate attention across large volumes of tokens, then the human challenge of the AI era is whether we can relearn how to allocate our own attention. For models, attention is part of intelligence. For humans, it may already be part of survival.

In that sense, in the age of AI, attention is not only all you need.

It is one of the last things you truly own and cannot outsource.